PygmalionAI's large-scale inference engine

pygmalion.chat

It is designed to serve as the inference endpoint for the PygmalionAI website, and to allow serving the Pygmalion models to a large number of users with blazing fast speeds (thanks to vLLM's Paged Attention).

|

|

8 сар өмнө | |

|---|---|---|

| .github | 9 сар өмнө | |

| aphrodite | 8 сар өмнө | |

| assets | 10 сар өмнө | |

| cmake | 8 сар өмнө | |

| docker | 9 сар өмнө | |

| examples | 8 сар өмнө | |

| kernels | 8 сар өмнө | |

| rocm_patch | 10 сар өмнө | |

| tests | 8 сар өмнө | |

| .gitignore | 8 сар өмнө | |

| CMakeLists.txt | 9 сар өмнө | |

| LICENSE | 1 жил өмнө | |

| MANIFEST.in | 9 сар өмнө | |

| README.md | 9 сар өмнө | |

| build-linux-wheel.sh | 9 сар өмнө | |

| env.py | 9 сар өмнө | |

| environment.yaml | 9 сар өмнө | |

| formatting.sh | 9 сар өмнө | |

| mypy.ini | 8 сар өмнө | |

| patch_xformers.rocm.sh | 10 сар өмнө | |

| pyproject.toml | 8 сар өмнө | |

| requirements-common.txt | 8 сар өмнө | |

| requirements-cpu.txt | 9 сар өмнө | |

| requirements-cuda.txt | 9 сар өмнө | |

| requirements-dev.txt | 9 сар өмнө | |

| requirements-neuron.txt | 9 сар өмнө | |

| requirements-rocm.txt | 9 сар өмнө | |

| runtime.sh | 10 сар өмнө | |

| setup.py | 9 сар өмнө | |

| update-runtime.sh | 8 сар өмнө |

README.md

Breathing Life into Language

Aphrodite is the official backend engine for PygmalionAI. It is designed to serve as the inference endpoint for the PygmalionAI website, and to allow serving the Pygmalion models to a large number of users with blazing fast speeds (thanks to vLLM's Paged Attention).

Aphrodite builds upon and integrates the exceptional work from various projects.

The compute necessary for Aphrodite's development is provided by Arc Compute.

Features

- Continuous Batching

- Efficient K/V management with PagedAttention from vLLM

- Optimized CUDA kernels for improved inference

- Quantization support via AQLM, AWQ, Bitsandbytes, EXL2, GGUF, GPTQ, QuIP#, Smoothquant+, and SqueezeLLM

- Distributed inference

- Variety of sampling methods (Mirostat, Locally Typical Sampling, Tail-Free Sampling, etc)

- 8-bit KV Cache for higher context lengths and throughput, at both FP8 and INT8 formats.

Quickstart

pip install aphrodite-engine

python -m aphrodite.endpoints.openai.api_server --model PygmalionAI/pygmalion-2-7b

[!CAUTION] If the installation reports CUDA kernel errors, please run

pip install aphrodite-engine=0.4.5instead.

This will create a OpenAI-compatible API server that can be accessed at port 2242 of the localhost. You can plug in the API into a UI that supports OpenAI, such as SillyTavern.

You can play around with the engine in the demo here:

Docker

Additionally, we provide a Docker image for easy deployment. Here's a basic command to get you started:

sudo docker run -d -e MODEL_NAME="mistralai/Mistral-7B-Instruct-v0.2" -p 2242:7860 --gpus all --ipc host alpindale/aphrodite-engine

This will pull the Aphrodite Engine image (~9GiB download), and launch the engine with the Mistral-7B model at port 2242. Check here for the full list of env variables.

See here for the Compose file to use with Docker Compose.

Requirements

- Operating System: Linux (or WSL for Windows)

- Python: at least 3.8

For windows users, it's recommended to use tabbyAPI instead, if you do not need batching support.

Build Requirements:

- CUDA >= 11

For supported GPUs, see here. Generally speaking, all semi-modern GPUs are supported - down to Pascal (GTX 10xx, P40, etc.)

Installation

Usage

For usage, please refer to the wiki page for detailed instructions. Aphrodite provides many different options for LLM inference, so please read through the list of options here.

Performance

Speeds vary with different GPUs, model sizes, quantization schemes, batch sizes, etc. Here are some baseline benchmarks conducted by requesting as many completions as possible from the API server.

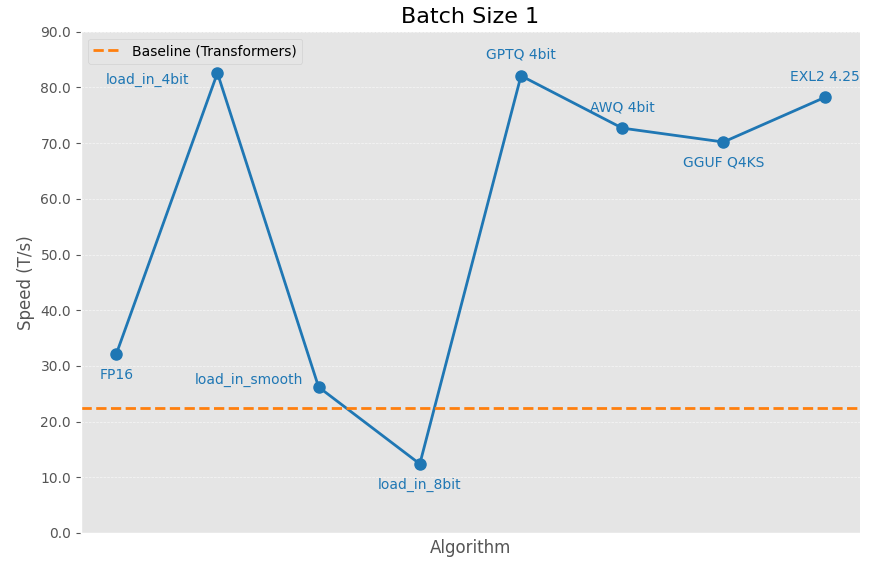

Batch Size 1 Performance

These are the speeds a user would normally get if they request a single output with a sizable prompt and output length. Essentially, normal chatting experience.

The following results were gathered by sending a request with 8192 prompt tokens and requesting 1024 tokens with ignore_eos=True.

GPU: NVIDIA A40, Mistral 7B. Baseline is the same model loaded with text-generation-webui in FP16.

High Batch Size Performance

[!NOTE]

The numbers below are the theoretical peak achieved by only requesting output tokens at very high batch sizes. At lower batch sizes with much larger prompts, the results will be vastly different. Throughput refers to output tokens per second.

This table is outdated, will be replaced soon.

| Model | Quantization | bits | GPU | Throughput (T/s) |

|---|---|---|---|---|

| Mistral 7B | None | 16 | RTX 4090 | 5489.3 |

| AWQ | 4 | RTX 4090 | 4078.8 | |

| GPTQ | 4 | RTX 4090 | 7850.4 | |

| 8 | RTX 4090 | 7658.0 | ||

| GGUF | Q8 | RTX 4090 | 5141.2 | |

| Q6KM | RTX 4090 | 5791.7 | ||

| Q5KM | RTX 4090 | 5786.2 | ||

| Q4KM | RTX 4090 | 5815.8 | ||

| SqueezeLLM | 4 | RTX 4090 | 549.5 | |

| Llama-2 7B | None | 16 | RTX 4090 | 2576.2 |

| AWQ | 4 | RTX 4090 | 3551.3 | |

| GPTQ | 4 | RTX 4090 | 2919.1 | |

| GGUF | Q4KM | RTX 4090 | 2726.6 | |

| Q5KM | RTX 4090 | 2763.4 | ||

| Q6KM | RTX 4090 | 2694.7 | ||

| Q8 | RTX 4090 | 2647.0 | ||

| SqueezeLLM | 4 | RTX 4090 | 580.3 |

Notes

By design, Aphrodite takes up 90% of your GPU's VRAM. If you're not serving an LLM at scale, you may want to limit the amount of memory it takes up. You can do this in the API example by launching the server with the

--gpu-memory-utilization 0.6(0.6 means 60%).You can view the full list of commands by running

python -m aphrodite.endpoints.openai.api_server --help.Context Length extension via the RoPE method is supported for most models. Use the command-line flag

--max-model-lento specify a desired context length and the engine will adjust the RoPE scaling accordingly.Please refer to the FAQ & Issues if you run into problems. If you don't find an answer there, please make an issue.

Acknowledgements

Aphrodite Engine would have not been possible without the phenomenal work of other open-source projects. Credits go to:

- vLLM (CacheFlow)

- TensorRT-LLM

- xFormers

- AutoAWQ

- AutoGPTQ

- SqueezeLLM

- Exllamav2

- TabbyAPI

- AQLM

- KoboldAI

- Text Generation WebUI

- Megatron-LM

- Ray

Contributing

Everyone is welcome to contribute. You can support the project by opening Pull Requests for new features, fixes, or general UX improvements.